6 Steps to Responsibly Choose an AI Tool for Your School

If you’re a school leader, you may be having strong feelings about AI right now. First, it crept into conversations as a question of student cheating and how to stop it. Then other ethical issues got added to the pile: data privacy, copyright, and climate change. Just as things were starting to look very grim, claims that AI is the future of schools became hard to ignore. Maybe it can help your teachers save time? Maybe avoiding it really does leave your students unprepared for a world that will have embraced it by the time they graduate?

So now you’re on the hunt. What AI tool is right for your school? You’ve created an AI committee. They’ve researched what’s out there.

What’s out there? A lot of AI tools that all promise very similar things. How can they possibly be compared?

If you're stuck in that spot, this article is for you. What matters most when choosing an AI tool for your school? And how can you evaluate it properly?

Right now, the members of an AI committee typically read the website and briefly try out the product, scanning for red flags. If everything looks good (and it usually does, because looking good is exactly what AI excels at), then the main thing affecting their decision may be marketing, which has nothing to do with the quality of the product. And with AI, the devil is always in the details. In Silicon Valley, the prevailing wisdom is that the more a tool can do for you and the more frictionless it is, the better. So these tools are designed to look seamless, and that makes the surface-level scan especially unreliable. We need a better checklist for what really matters when adopting an AI tool for a school. Here's a starting point:

For students

Quality of content

Start with the content itself. If the tool generates reading passages, plug a few into the Hemingway App and check whether the reading level matches what's claimed. If it generates questions, check whether they ALL test recall or whether the tool can also generate questions that test something deeper. Quality checks like these take just a few minutes and can reveal a lot, but they have to go one step beyond a “does this look good?” sniff test and involve some close examination.
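If your committee wants to run the reading-level check on many passages at once, a few lines of Python can do it. The sketch below uses the Automated Readability Index, one standard published readability formula; it is an illustration only, and not a claim about how any particular app or vendor computes its own grade levels. The function names and the one-grade tolerance are assumptions made up for this example.

```python
import re


def automated_readability_index(text: str) -> float:
    """Estimate a US grade level with the Automated Readability Index (ARI).

    ARI = 4.71 * (characters / words) + 0.5 * (words / sentences) - 21.43
    The splitting below is deliberately naive: abbreviations, decimals, and
    curly apostrophes will skew the counts slightly.
    """
    words = re.findall(r"[A-Za-z0-9']+", text)
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not words or not sentences:
        raise ValueError("text needs at least one word and one sentence")
    # Count only letters and digits, as the ARI formula expects.
    characters = sum(1 for word in words for ch in word if ch.isalnum())
    return 4.71 * characters / len(words) + 0.5 * len(words) / len(sentences) - 21.43


def within_claimed_grade(text: str, claimed_grade: float, tolerance: float = 1.0) -> bool:
    """Hypothetical helper: is the estimate within `tolerance` grades of the claim?"""
    return abs(automated_readability_index(text) - claimed_grade) <= tolerance


if __name__ == "__main__":
    # Made-up sample passage; paste in real output from the tool under review.
    passage = "Plants make food from sunlight. This process is called photosynthesis."
    print(round(automated_readability_index(passage), 1))
    print(within_claimed_grade(passage, claimed_grade=4))
```

Treat the number as a rough signal, not a verdict. Readability formulas only measure word and sentence length, so a passage that scores at the right grade can still be confusing or inaccurate, and very short passages give unstable scores.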

Breaking the AI

Then, try to break it. Can a student get around the guardrails? Can they get the AI to just give them the answer? The best way to find out is to put a group of students on your AI committee, or to spend a class period teaching students about AI and then let them deliberately try to get around the guardrails using nothing but prompts. This will be fun and educational, and they will find the cracks faster than any adult will. Once one student has found a way to break it, ask: how reliable is this method? What other methods are there? Remember that AI is probabilistic, so it’s not going to behave the same way every time.
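Because the same prompt can work on one attempt and fail on the next, "how reliable is this method?" is best answered by repeating it and counting. The sketch below assumes students record how many times a workaround succeeded out of a fixed number of tries, then computes a Wilson score interval, a standard way to put a range around a rate measured from a small sample. The function name and the example counts are hypothetical.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Return an approximate 95% Wilson score interval for a success rate.

    `successes` is how many attempts got past the guardrails and `trials`
    is how many attempts were made in total. z = 1.96 corresponds to a
    95% confidence level.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    rate = successes / trials
    denominator = 1 + z * z / trials
    center = (rate + z * z / (2 * trials)) / denominator
    half_width = (
        z * math.sqrt(rate * (1 - rate) / trials + z * z / (4 * trials * trials))
        / denominator
    )
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical tallies: a workaround that worked 3 times in 20 tries,
    # and one that never worked in 10 tries.
    for worked, tried in [(3, 20), (0, 10)]:
        low, high = wilson_interval(worked, tried)
        print(f"{worked}/{tried}: plausible success rate {low:.0%} to {high:.0%}")
```

The practical lesson is in the second case: a workaround that failed every time in a handful of tries can still have a plausible success rate well above zero, so a few clean attempts are not proof that the guardrails hold.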

For teachers

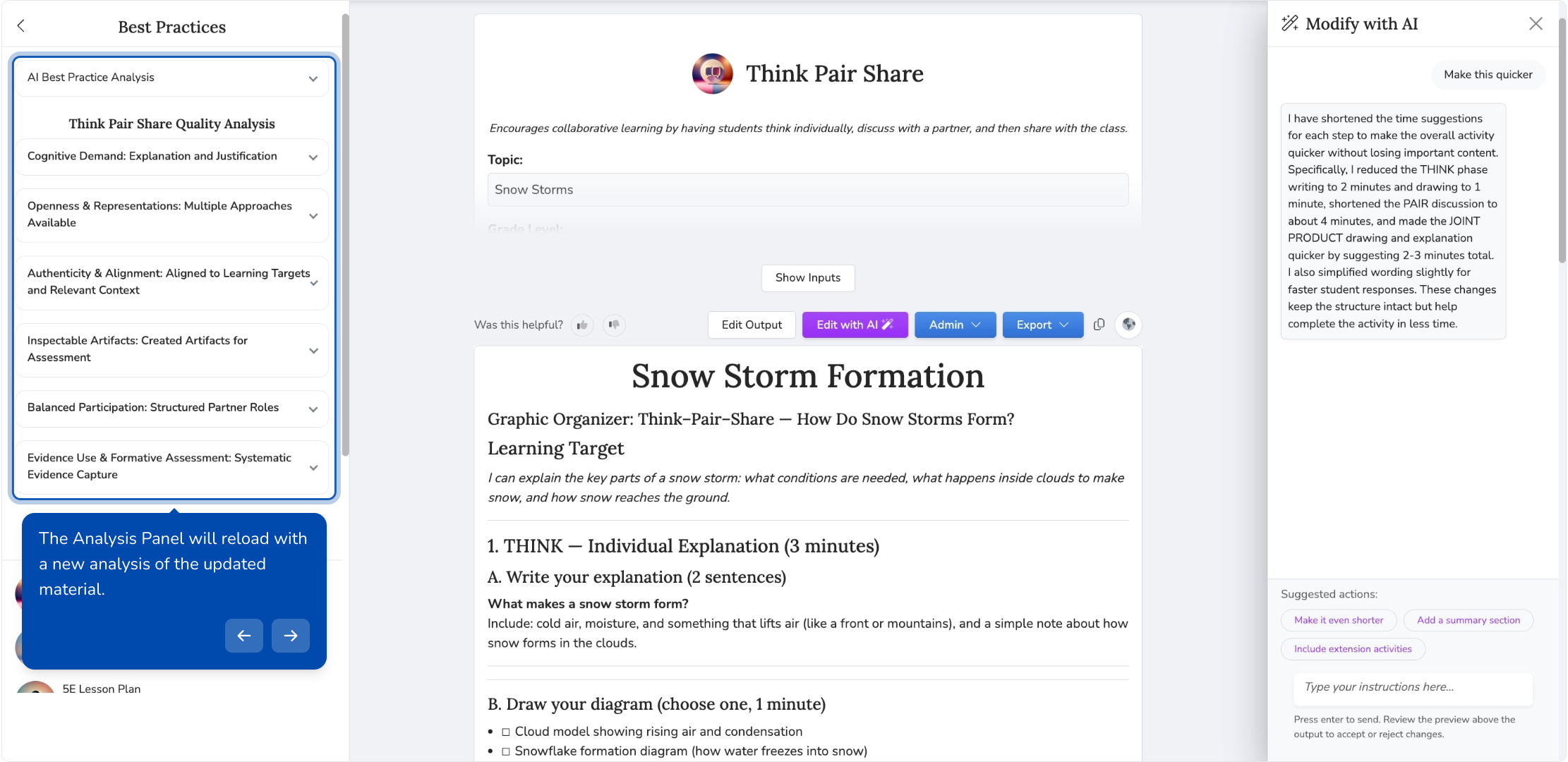

Pedagogical transparency

Look at what happens after the AI generates something. When a tool produces a question, a rubric, or a lesson plan, does it show the teacher why it made the choices it did? Or is the teacher just expected to glance at the output and hit "use"? This is the difference between a tool that builds pedagogical judgment over time and one that tries to replace it. A good AI tool should give teachers ways to evaluate and refine what it produces, not just accept or reject it.

Professional collaboration

Does the tool assume every teacher is working alone? Or does it give teachers ways to share what they've created, compare approaches, and have deeper conversations about why they're using certain materials at certain points in the curriculum? The best tools treat teaching as a collective practice, not a solo one.

For the school or district

What happens when you stop paying?

Ask this one directly. If your teachers have spent years building materials inside a platform, can they take those materials with them? Content portability may not be the flashiest feature in a sales demo, but it tells you a lot about whether the company sees you as a partner or a captive audience.

How is it improving?

Is the company iterating based on learning science research, or just on user engagement metrics? These two paths lead to very different products over time. A tool optimized for engagement may get slicker and stickier, but that may lead to lower-quality teaching and learning rather than higher. There is no reason AI tools can't be built on the latest research in the learning sciences and AI methods, so set your standards high.

Conclusion

None of these checks require technical expertise, but they do require slowing down. The tools that look the most polished in a demo may be the ones most worth scrutinizing, because polish is what AI is best at producing.

Your AI committee doesn't need to become a group of AI experts. But they do need permission to be opinionated, specific, and a little bit stubborn, not just about data privacy but also about what counts as quality. The six checks above won't give you a perfect score for every tool. But they'll get you past the surface, to where the important differences live.

If you want to see what this looks like in practice, QuestionWell was built with exactly these principles in mind. We'd love to show you.